Deepfakes and the Future of Trust on the Internet

Mar 4, 2026

Dre K

CTO

Deepfake technology uses artificial intelligence to create realistic videos or audio recordings that appear to show real people saying or doing things they never actually did. What once required advanced visual effects studios can now be done using widely available AI tools.

The technology works by training machine learning models on large datasets of images, video, and audio recordings. These models learn patterns in facial expressions, voice tones, and movements, allowing them to generate synthetic media that closely resembles real individuals.

Deepfakes are already used in entertainment and film production, but they have also been used to create misleading content involving political figures, celebrities, and public officials.

One of the biggest concerns surrounding deepfakes is their potential to manipulate public opinion. A fabricated video of a public figure making controversial statements could spread rapidly before fact-checkers or journalists have time to verify its authenticity.

Researchers at Stanford University and the Brookings Institution have warned that synthetic media could become a powerful tool for disinformation campaigns. As AI improves, deepfakes may become more convincing and more difficult to detect.

Another concern is the misuse of personal identity. Individuals may find their likeness used in manipulated media without consent, which raises ethical and legal questions about privacy and digital identity.

Technology companies and research organizations are actively developing tools to detect manipulated media. These tools analyze subtle inconsistencies in images, video frames, lighting patterns, and audio signals to identify potential signs of artificial generation.

Despite these advances, detection remains an ongoing challenge because generative AI models are constantly improving.
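To make the idea of "subtle inconsistencies" concrete, here is a minimal sketch of one such check: flagging frames whose overall brightness jumps abnormally compared with the rest of a clip. It is a toy illustration only, not how any production detector works. The function names, the grayscale 2D-list frame format, and the `z_threshold` value are all assumptions made for this example.

```python
from statistics import mean, pstdev


def frame_brightness(frame):
    """Average pixel value of a frame given as a 2D list of grayscale values."""
    pixels = [p for row in frame for p in row]
    return mean(pixels)


def flag_lighting_jumps(frames, z_threshold=2.0):
    """Return indices of frames whose brightness change from the previous
    frame is an outlier relative to the other changes in the clip.

    Hypothetical helper for illustration; the threshold is an assumption.
    """
    brightness = [frame_brightness(f) for f in frames]
    # Absolute brightness change between each pair of consecutive frames.
    deltas = [abs(b - a) for a, b in zip(brightness, brightness[1:])]
    if len(deltas) < 2:
        return []
    mu, sigma = mean(deltas), pstdev(deltas)
    if sigma == 0:
        return []  # every change is identical, so nothing stands out
    # deltas[i] is the change going into frame i + 1.
    return [i + 1 for i, d in enumerate(deltas) if (d - mu) / sigma > z_threshold]
```

A flagged frame here is only a hint that something changed abruptly, which could just as easily be an ordinary scene cut. Real detection tools typically combine many signals like this and rely on trained models rather than a single hand-written rule.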

For internet users, knowing that deepfakes exist is the first step toward responsible media consumption. Questioning the source of viral content and relying on credible information sources can help reduce the impact of manipulated media.

As synthetic media becomes more sophisticated, the future of the internet will depend heavily on transparency, verification systems, and responsible platform design.

Why RealScroll Exists

The rise of artificial intelligence has made it harder than ever to know what is real online. Images, videos, and audio can now be generated in seconds, often appearing indistinguishable from real-world events.

RealScroll was created to bring transparency back to social media.

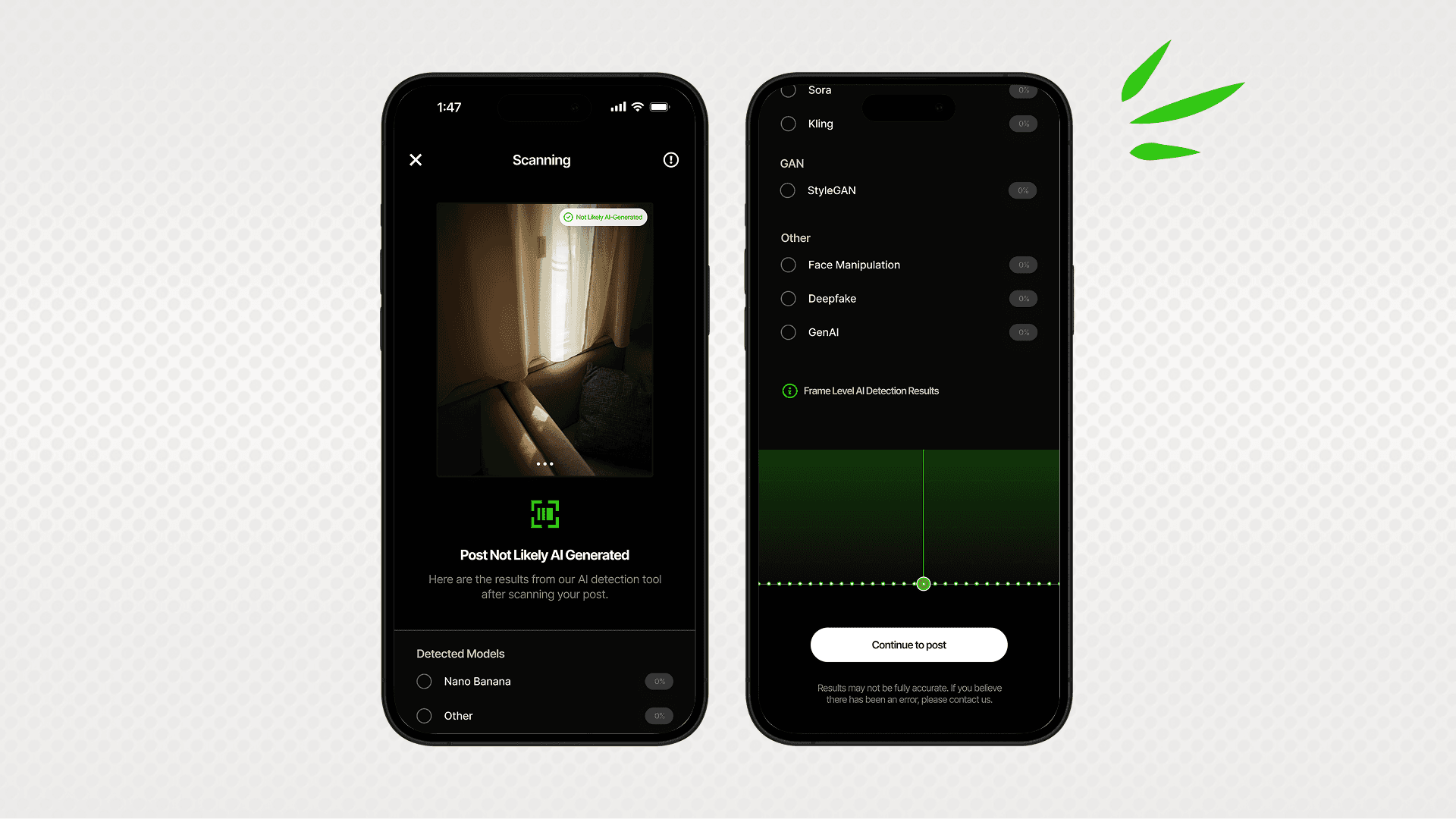

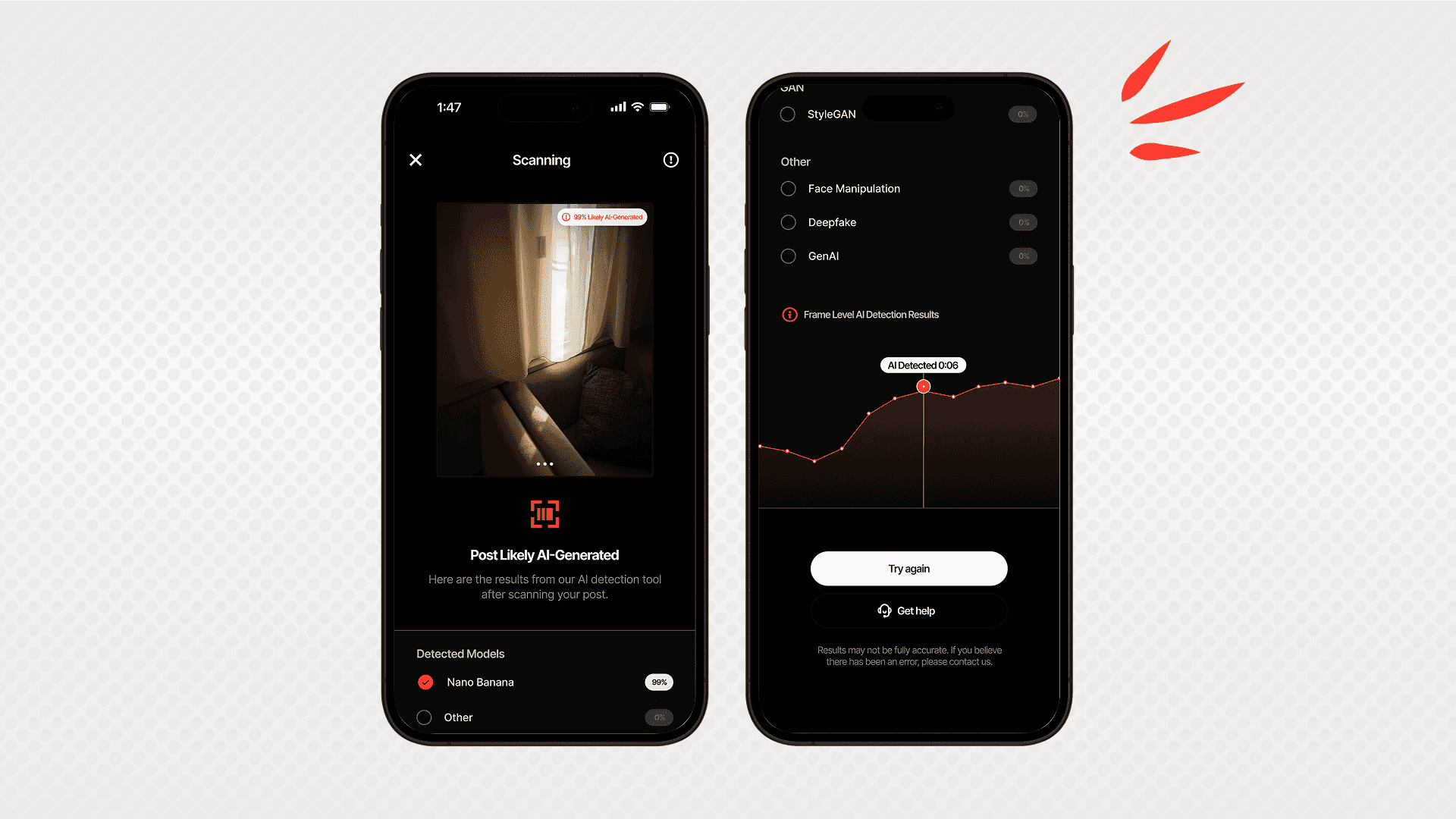

Our platform analyzes uploaded media using detection technologies designed to identify possible indicators of artificial generation or manipulation. When content is reviewed, users can see contextual signals that help them better understand what they are viewing.
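As a rough sketch of what "contextual signals" attached to a piece of media could look like in code, consider the following. This is a hypothetical data model written for illustration and does not describe RealScroll's actual implementation. The class names, signal names, score range, and threshold are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    name: str     # hypothetical signal name, e.g. "lighting_inconsistency"
    score: float  # assumed range: 0.0 (no indication) to 1.0 (strong indication)


@dataclass
class MediaReview:
    media_id: str
    signals: list[Signal] = field(default_factory=list)

    def summary(self, threshold: float = 0.5) -> str:
        """Describe which signals met the threshold, without issuing a verdict."""
        flagged = [s.name for s in self.signals if s.score >= threshold]
        if not flagged:
            return "No indicators of artificial generation found"
        return "Possible indicators: " + ", ".join(flagged)
```

The summary reports indicators rather than declaring content real or fake, which mirrors the idea of giving viewers context and leaving the judgment to them.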

The goal is not to control what people share, but to give the community more clarity.

Instead of guessing whether a photo or video might be artificial, RealScroll provides tools that help users make more informed decisions about the content they see.

In a digital world filled with uncertainty, transparency matters more than ever.

RealScroll is built to help make social media feel real again.